12. SAM Audio for instrument separation

Prologue

Meta has yet again released a game-changing open-source model: this time an audio-segmentation (or stem-extraction) model in the Segment Anything Model (SAM) family, named SAM Audio. As a long-time fan of the SAM models, I couldn’t wait to try it out.

This month’s project will be quite different from the other projects, as I am not really building anything, but rather testing stuff. In particular, I will test its ability to extract individual instruments/components of songs, such as vocals, piano, etc. This was an opportunity for me to listen to some great music, while also testing if the model lives up to the hype.

SAM Audio

SAM Audio is a foundation model that supports multimodal prompting, similar to SAM 3. It can use text to describe what to segment, clicks to describe where to segment (if using video), and intervals to describe when to segment. It’s not necessary to use all prompt types simultaneously, though that is definitely an option.

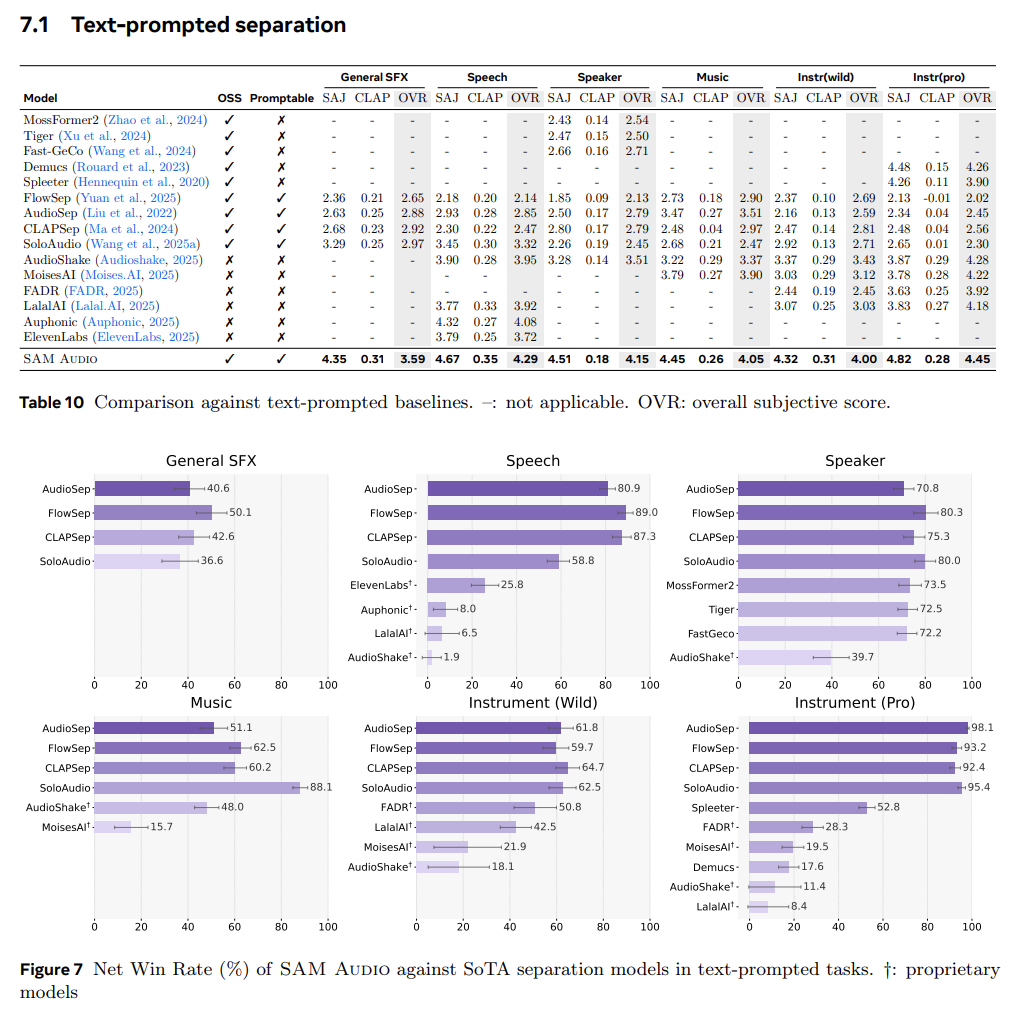

The model is benchmarked on six categories of audio: General Sound Effects, Speech, Speaker, Music, Instrument (Wild), and Instrument (Pro) (more details in the paper). For text-only prompts, the authors claim that it outperforms all competing models in every category.

If these claims hold, SAM Audio is state-of-the-art (SOTA), and this is roughly the best performance we can currently expect for stem extraction tasks.

Setup

There is an online demo where you can test it out for yourself. Unfortunately, I had no luck uploading my own content, and was stuck with the default examples. So instead, I chose to run the model locally. Well, technically it was on my university’s compute cluster, but that is still relatively local as I live near campus 🙃.

But just as I had feared, setting this up was a nightmare, due to lackluster documentation and an incomplete description of the packages needed to run it (just like all the other SAM models). Anyway, I got it to work. Many golden nuggets can be found in this blog post. I got the large version of the model running with half-precision, but as I eventually came to understand, using half-precision hurt the output quality a lot. Instead, I used the base/medium version with full precision, where I could run inference on 30-second chunks at a time.
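Processing a song in 30-second chunks can be sketched roughly as follows. This is only a minimal illustration, not the code I actually ran: `separate_fn` is a hypothetical placeholder for whatever call runs the model on one chunk, and the waveform is assumed to be a mono 1-D NumPy array. Chunks are simply concatenated, with no overlap or cross-fade at the boundaries.

```python
import numpy as np

def separate_in_chunks(waveform, sample_rate, separate_fn, chunk_seconds=30.0):
    """Run separate_fn on consecutive fixed-length chunks and stitch the results.

    separate_fn is a hypothetical callable: it takes a 1-D array of samples
    and returns a 1-D array of the same length (the extracted stem).
    """
    chunk_len = int(chunk_seconds * sample_rate)
    pieces = []
    for start in range(0, len(waveform), chunk_len):
        # The last chunk may be shorter than chunk_len.
        chunk = waveform[start:start + chunk_len]
        pieces.append(separate_fn(chunk))
    if not pieces:
        return waveform.copy()
    return np.concatenate(pieces)
```

Hard cuts like this can produce audible seams at the chunk boundaries, so overlapping the chunks and cross-fading would be a natural refinement.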

Results

Please be aware that I have cherry-picked the best results for the sake of enjoyment, which is not very scientific. We could also expect better results by combining multiple prompt types, such as adding a span (time interval). Anyway, let’s get to it:

🗣️ Voice

I imagine that the human voice is highly overrepresented in the dataset compared to the other instruments, as voice appears in many places other than music data. As you might have guessed, the model also happens to be quite good at extracting vocals, meaning that I am one step closer to recreating this masterpiece of a video:

This is my attempt:

Greased Lightning - John Travolta

Processed:

Original:

A cleaner example featuring Frank Sinatra:

Fly Me to the Moon - Frank Sinatra

Processed:

Original:

And here is an example of a duet:

Die With a Smile - Bruno Mars / Lady Gaga

Processed:

Original:

🎹 Piano

The tonal range of a piano spans from quite deep to quite high, making it harder to distinguish among the many other overlapping frequencies. By the same logic, the bass should in theory be very easy to isolate, as it only lives within the low frequencies. Here is an example of piano that sounds reasonably good, even though it misses a few notes:

All of the Lights - Kanye West

Processed:

Original:

Have you ever wondered how Piano Man would sound without the actual piano? If we can isolate a sound, we can also play the full audio with that sound subtracted. Well, introducing “Man”:

(Piano) Man - Billy Joel

Processed:

Original:
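The subtraction idea behind “Man” can be sketched as a sample-by-sample difference between the original mix and the isolated stem. This is a rough illustration under assumptions of my own: both signals are mono float arrays in the range [-1, 1], share the same sample rate, and are time-aligned. The function name `remove_stem` is hypothetical.

```python
import numpy as np

def remove_stem(mixture, stem):
    """Return the mixture with the isolated stem subtracted, sample by sample."""
    # Trim to the shorter signal in case the lengths differ slightly.
    n = min(len(mixture), len(stem))
    residual = mixture[:n].astype(np.float32) - stem[:n].astype(np.float32)
    # Keep the result inside the valid range for float audio.
    return np.clip(residual, -1.0, 1.0)
```

Any error in the extracted stem ends up in the result, so missed notes in the piano stem would remain audible in the "piano-free" version.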

🎸 Guitar

The guitar is a versatile instrument just like the piano, but doesn’t have the same range. I hypothesize that this is an important factor when it comes to extracting guitar sounds, but who knows?

Let’s start off with the acoustic guitar:

House of the Rising Sun - The Animals

Processed:

Original:

Die With a Smile - Bruno Mars / Lady Gaga

Processed:

Original:

You might have noticed that the second example sounds like the quality has decreased, even though the instrument has actually been recovered. I wonder whether all the examples are like this, and it is simply easier to hear now because the song is more delicate and slow.

Even though the acoustic guitar has a limited range, it’s quite normal to have two guitars in rock/metal bands: a lead guitar (higher frequencies) and a rhythm guitar (low-mid range). Counting the bass guitar, we get an even lower-frequency guitar.

Even for humans, it can be hard to distinguish between a low-frequency guitar and a bass. As a result, the bass is often included when we choose to extract the guitar sound. Another explanation could be that a bass is technically a guitar, meaning that it should be included.

Enter Sandman - Metallica

Processed:

Original:

Saturday Night's Alright - Elton John

Processed:

Original:

🥁 Percussion

There is not much to say about percussion (this includes drums). Because percussive sounds appear in predictable patterns, and usually lie in the very low or very high frequencies, they are easy to extract. Here are some examples:

Dance the Night - Dua Lipa

Processed:

Original:

Enter Sandman - Metallica

Processed:

Original:

Fly Me to the Moon - Frank Sinatra

Processed:

Original:

🎺 Brass

To my dismay, it was very hard for the model to consistently extract brass instruments. I even tried multiple related prompts, such as trumpet, trombone, big band, and brass. It is very common for the model to include other non-brass sounds, including vocals. These were the best (very cherry-picked) results I could get:

All of the Lights - Kanye West

Processed:

Original:

Fly Me to the Moon - Frank Sinatra

Processed:

Original:

🎻 Strings

Unfortunately, the model also underperforms on string instruments and produces the exact same artifacts as with the brass instruments.

The Wonder of You - Elvis Presley

Processed:

Original:

Dance the Night - Dua Lipa

Processed:

Original:

You have a great voice, Dua Lipa, but unless you are a cello, please be quiet.

Conclusion

I am quite impressed by the model, even though it fails to separate certain instruments. For the instruments it does separate, the results are decent. I can imagine this model being used in many different ways. Karaoke is an obvious one. Curating audio datasets or doing offline noise removal would make sense as well. I hope to see some cool applications of this in the future!